Human Brain vs. Computer: Unpacking the Analogy & Its Implications

Explore the enduring analogy of the human brain as a computer. This article delves into the historical use of technology to understand the mind, from water clocks to modern AI, questioning how we define intelligence.

Does the Human Brain Work Like a Computer? Unpacking the Most Profound Analogy of Our Time

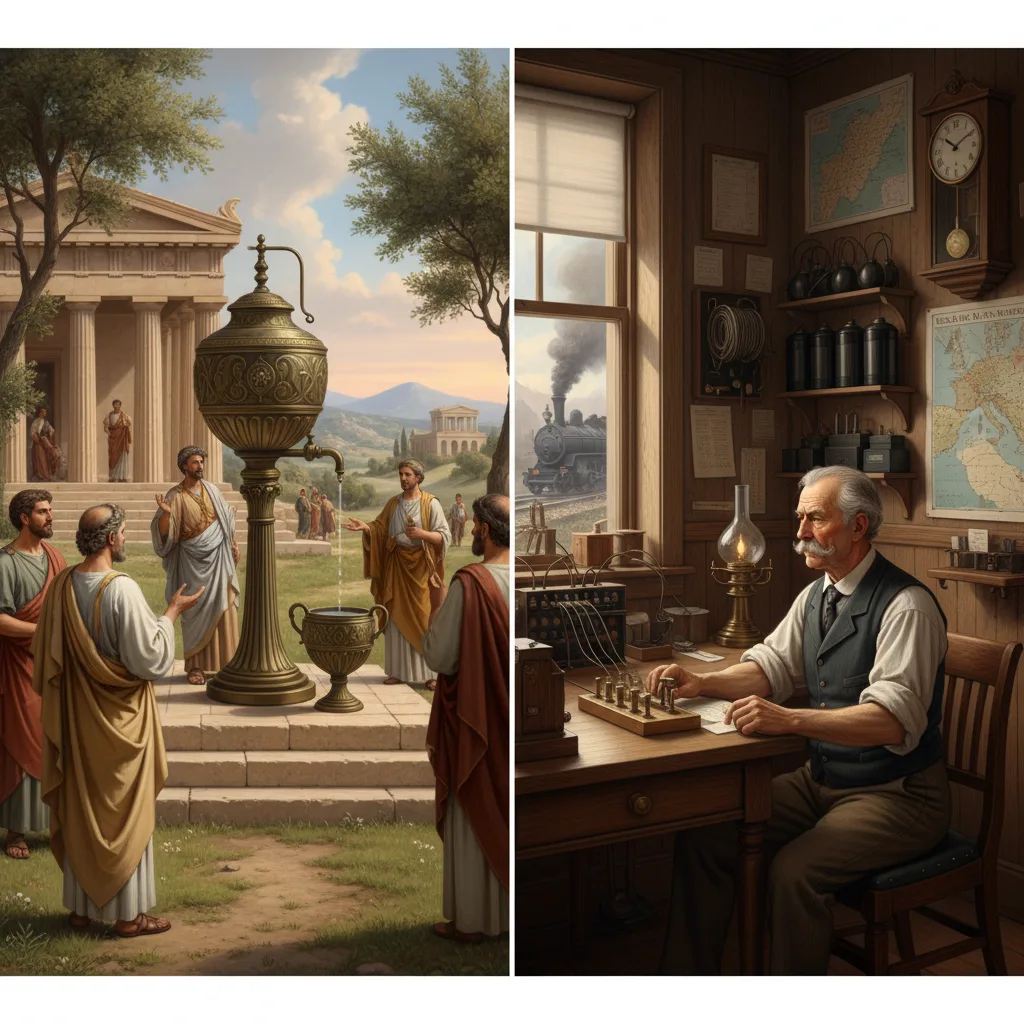

Imagine a world without analogies. How would we describe the indescribable, understand the complex, or illuminate the unknown? For centuries, humanity has grasped at metaphors drawn from the cutting edge of its technology to comprehend the ultimate frontier: the human mind. From ancient water clocks influencing theories of fluid humors to the telegraph inspiring thoughts of electrical impulses, our understanding of ourselves has always mirrored our most sophisticated inventions. Today, the reigning metaphor, the one that whispers in our scientific papers and daily conversations, is the computer. But the burning question remains: does the human brain work like a computer, or is this powerful analogy leading us down a captivating but ultimately misleading path? Let’s dive deep into the intricate machinery of thought and discover where the similarities dazzle and where brain and machine part ways.

The Allure of the Machine Metaphor: From Logic Gates to Neurons

The idea that our brains might operate like sophisticated machines isn’t new, but it gained unprecedented traction with the advent of the digital computer in the mid-20th century. Think back to the pioneering work of figures like Alan Turing, whose theoretical “Turing machine” laid the groundwork for modern computation, and John von Neumann, who articulated the architecture that still underpins most computers today. Suddenly, we had a tangible model for information processing: inputs, outputs, memory storage, and logical operations.

This new paradigm offered an irresistible framework for understanding cognition. Researchers in fields like cybernetics and artificial intelligence began to see the brain as a complex biological computer, its neurons acting as switches, its synapses as connections, and its thoughts as algorithms. The appeal was immense: if we could understand the brain as a machine, perhaps we could reverse-engineer intelligence itself, cure neurological diseases, or even upload consciousness. This powerful metaphor has shaped decades of research, driving both incredible breakthroughs and persistent philosophical debates. But how deep does this resemblance truly run?

The Architecture of Thought: Bits, Bytes, and Electrochemical Whispers

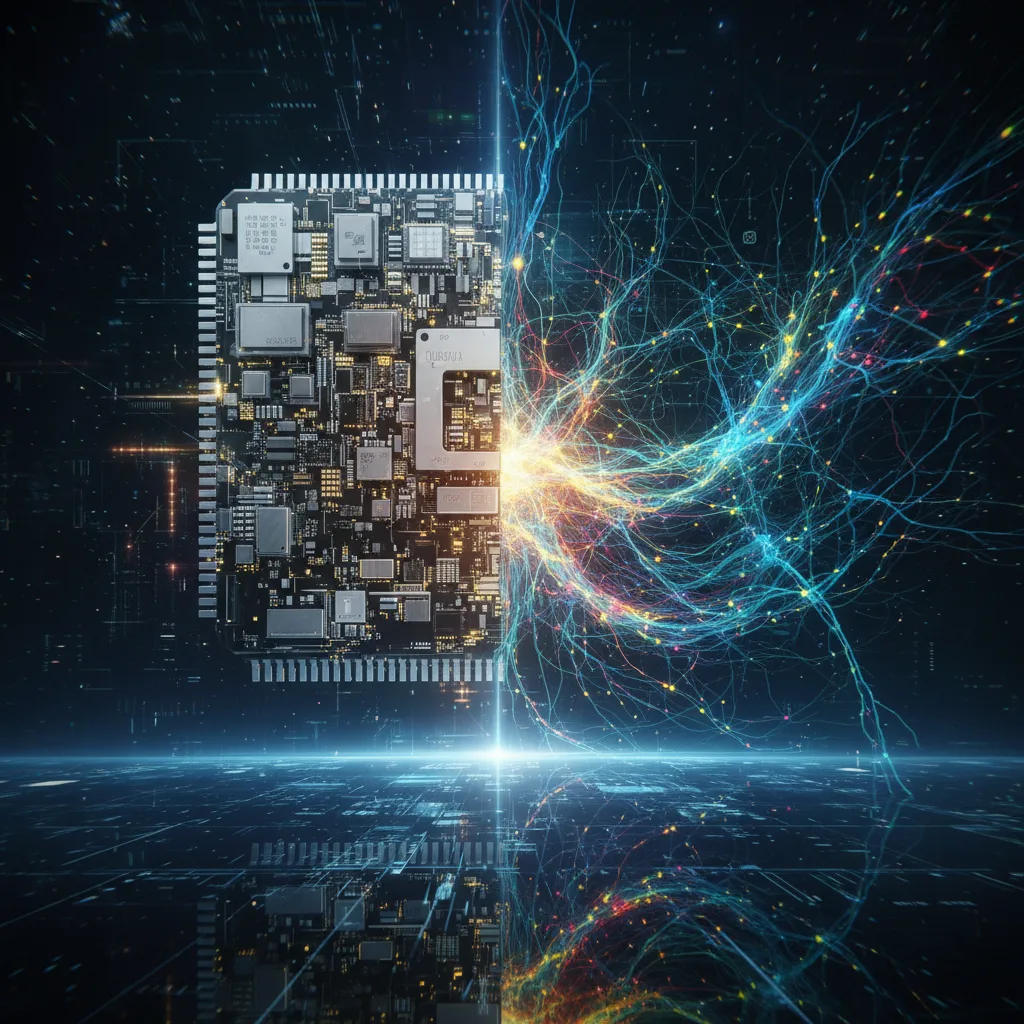

At a foundational level, the architectures of a computer and a brain appear strikingly different. A modern computer, whether it’s the smartphone in your pocket or a supercomputer filling a room, relies on digital processing. Its fundamental unit is the bit, representing either a 0 or a 1, a discrete on or off state. Bits are held in memory (RAM) or in longer-term storage such as a hard drive, and they are manipulated by billions of tiny transistors etched onto silicon chips, which carry out the instructions of a Central Processing Unit (CPU) with incredible precision and speed.
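
To make the idea of a bit concrete, here is a minimal Python sketch of how two ordinary characters reduce to a string of 0s and 1s. The helper name `to_bits` is ours, chosen purely for illustration.

```python
def to_bits(text):
    """Return the UTF-8 bytes of `text` as groups of eight 0/1 digits."""
    return " ".join(format(byte, "08b") for byte in text.encode("utf-8"))


bits = to_bits("Hi")
print(bits)  # 01001000 01101001
```

Every photo, song, and program on a computer is ultimately a longer version of that same string.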

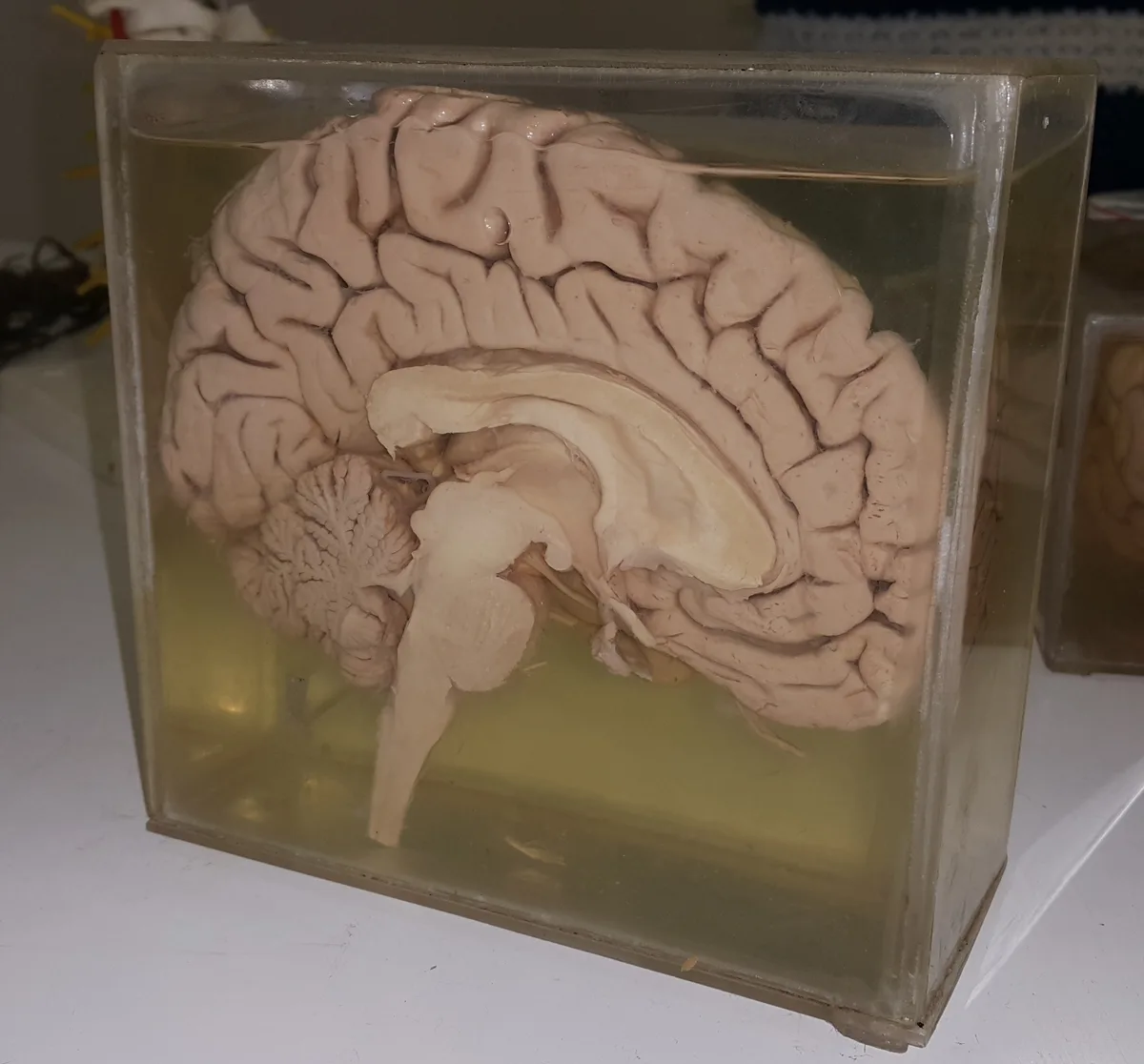

Contrast this with the brain’s “wetware.” Instead of silicon and transistors, we have an intricate network of approximately 86 billion neurons, each a living cell. These neurons communicate not through binary code, but through electrochemical signals. When a neuron “fires,” an electrical pulse called an action potential travels along its axon and triggers the release of neurotransmitters across tiny gaps called synapses, influencing the activity of up to thousands of other neurons. A single action potential is all-or-none, much like a bit, but whether and when a neuron fires depends on the continuous, graded summation of its inputs, and information is carried in the rate and timing of its spikes. In that sense the process is largely analog and far more nuanced than a simple on/off switch. Each neuron isn’t just a switch; it’s a miniature, adaptable processor, constantly modulating its responses based on a myriad of incoming signals. In its connectivity and organic complexity, this network remains unlike anything engineers have built, even in the most powerful supercomputers.
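
A rough way to see how a neuron can be "more than a switch" is the leaky integrate-and-fire model, a textbook simplification rather than a faithful picture of a real cell. The sketch below is illustrative only: the function name and the threshold, leak, and input values are arbitrary assumptions. The membrane potential accumulates graded input and slowly leaks away, and a spike occurs only when the potential crosses a threshold.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Toy leaky integrate-and-fire neuron (all parameter values are arbitrary)."""
    potential = reset
    spikes = []
    for current in input_current:
        # Graded part: the potential decays a little, then adds the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # all-or-none spike
            potential = reset  # potential returns to rest after firing
        else:
            spikes.append(0)
    return spikes


weak_spikes = simulate_lif([0.05] * 20)   # input too weak to ever reach threshold
strong_spikes = simulate_lif([0.3] * 20)  # stronger input produces regular spikes
print(sum(weak_spikes), sum(strong_spikes))  # 0 5
```

The same cell stays silent or fires repeatedly depending on the strength and history of its input, which is the sense in which its output reflects continuous quantities even though each spike is all-or-none.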

Processing Power: The Symphony of Parallelism vs. Sequential Speed

When we talk about “processing power,” computers often seem to win hands down. A modern CPU can execute billions of instructions per second, performing complex calculations with blinding speed and unerring accuracy. Computers excel at sequential processing: tackling one problem after another in a linear fashion, crunching numbers, and sorting data with unparalleled efficiency.

Yet, the brain operates on an entirely different principle: massive parallelism. While individual neurons fire relatively slowly (in milliseconds, compared to nanoseconds for transistors), the brain compensates by engaging vast numbers of neurons simultaneously. This allows it to perform tasks that long stumped computers and that machines still struggle to match with comparable flexibility, such as instantly recognizing a familiar face in a crowd, understanding a complex sentence, or navigating a chaotic environment in real time. This isn’t about raw speed, but about an unparalleled ability to integrate vast amounts of diverse information concurrently and adaptively. Furthermore, the brain isn’t a fixed architecture; it exhibits remarkable neuroplasticity, constantly rewiring and reorganizing its connections based on experience, a feat that conventional computer hardware does not replicate: its circuits stay fixed, and any adaptation has to be programmed in software.
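
The trade-off between slow parallel units and one fast sequential unit can be put into back-of-the-envelope numbers. The sketch below assumes, purely for illustration, that a neuron needs about 1 millisecond per step and a transistor about 1 nanosecond, the orders of magnitude mentioned above; the final figure is an unrealistic upper bound that imagines all 86 billion neurons active on every step.

```python
NEURON_STEP_NS = 1_000_000      # assumed: about 1 millisecond per neural step
TRANSISTOR_STEP_NS = 1          # assumed: about 1 nanosecond per switching step
NEURON_COUNT = 86_000_000_000   # neuron count quoted in this article
NS_PER_SECOND = 1_000_000_000

# How many times faster a single transistor is than a single neuron.
speed_ratio = NEURON_STEP_NS // TRANSISTOR_STEP_NS

# Steps per second for one unit of each kind.
neuron_steps_per_s = NS_PER_SECOND // NEURON_STEP_NS
transistor_steps_per_s = NS_PER_SECOND // TRANSISTOR_STEP_NS

# Number of slow units that must work at once to match one fast unit.
neurons_to_match = transistor_steps_per_s // neuron_steps_per_s

# Upper bound if every neuron took a step every millisecond (not realistic).
aggregate_steps_per_s = NEURON_COUNT * neuron_steps_per_s

print(speed_ratio)            # 1000000
print(neurons_to_match)       # 1000000
print(aggregate_steps_per_s)  # 86000000000000
```

Under these assumptions a million neurons working side by side match the step rate of one fast sequential unit, and the brain has tens of thousands of times more neurons than that.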

Memory: The Reconstructive Narrator vs. The Precise Archivist

The way our brains store and retrieve information is another critical divergence from the computer model. When you save a file on your computer, it’s stored in a specific, addressable location as an exact digital copy. Retrieval is precise and deterministic; the file you save is the file you get back, bit for bit. Computers excel at perfect recall of vast amounts of data.

Human memory, however, is a far more fluid and fascinating phenomenon. It’s not stored in a single “hard drive” but is distributed across various brain regions, with different aspects of a memory (visual, auditory, emotional) stored in different places. When we “recall” a memory, our brain doesn’t pull up an exact file; it actively reconstructs it, often filling in gaps, integrating new information, and even subtly altering details. This is why our memories are so susceptible to suggestion and why two people can have vastly different recollections of the same event. Our memories are deeply interwoven with our emotions, context, and current understanding, making them incredibly rich but also inherently fallible. The brain is less of a precise archivist and more of a creative, reconstructive narrator of our past.
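
The contrast between looking something up and reconstructing it can be sketched in a few lines of Python. The first part stores a pattern at an address and gets back an exact copy. The second part uses a Hopfield network, a classic toy model of associative memory, offered here only as an illustration and not as a claim about how the brain actually stores memories. All names and patterns below are made up for the example.

```python
def train_hopfield(patterns):
    """Hebbian weights for patterns of +1/-1 values, with no self-connections."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += pattern[i] * pattern[j]
    return weights


def recall(weights, cue, sweeps=5):
    """Update one unit at a time until the state settles on a stored pattern."""
    state = list(cue)
    for _ in range(sweeps):
        for i in range(len(state)):
            total = sum(w * s for w, s in zip(weights[i], state))
            state[i] = 1 if total >= 0 else -1
    return state


pattern_a = [1, 1, 1, 1, -1, -1, -1, -1]
pattern_b = [1, -1, 1, -1, 1, -1, 1, -1]

# Computer-style storage: an address maps to an exact copy.
archive = {0x10: tuple(pattern_a)}
exact_copy = list(archive[0x10])

# Associative storage: both patterns are spread across the same weights.
weights = train_hopfield([pattern_a, pattern_b])
cue = [1, 1, 1, -1, -1, -1, -1, -1]  # pattern_a with one element corrupted
recalled = recall(weights, cue)

print(exact_copy == pattern_a)  # True
print(recalled == pattern_a)    # True
```

The network is never told where the pattern "lives"; it rebuilds the whole from a damaged fragment. That is a loose analogy for reconstruction, and it hints at the cost too, since a network like this can settle on the wrong pattern when the cue is ambiguous.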

The “Software” Problem: Consciousness, Learning, and the Spark of Creativity

Here lies perhaps the most profound chasm between brain and machine: the realm of the “software.” Computers run on algorithms – sets of instructions designed by humans to achieve specific tasks. Even advanced machine learning systems, while capable of “learning” from data, do so within predefined parameters and objectives set by programmers. Their “creativity” often involves generating novel combinations based on existing patterns, not truly original thought.
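
To see what "learning within predefined objectives" means in practice, consider a minimal sketch of gradient descent, one common training technique. Everything here is an illustrative assumption: the data, the learning rate, the number of steps, and the function name. The point is that the program adjusts a single number `w`, but the goal it pursues (making `w * x` match `y`) was fixed in advance by whoever wrote the code.

```python
def train(xs, ys, learning_rate=0.05, steps=50):
    """Fit y = w * x by gradient descent on the mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the programmer-chosen objective: mean of (w*x - y)^2.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad
    return w


learned_w = train([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
print(round(learned_w, 3))  # 2.0
```

The system "discovers" that the answer is 2, yet it never chooses what to learn or why.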

The human brain, on the other hand, gives rise to phenomena we still barely understand: consciousness, self-awareness, subjective experience (the feeling of “redness” or the taste of chocolate, known as qualia), intuition, and genuine creativity. We learn not just by processing data, but by exploring, questioning, and forming abstract concepts without explicit programming. Our capacity for moral reasoning, empathy, and the pursuit of meaning transcends any current computational model. The “hard problem of consciousness” – explaining how physical processes in the brain give rise to subjective experience – remains one of science’s greatest unsolved mysteries, a challenge that suggests the brain is doing something fundamentally different from merely running algorithms.

The Energy Equation: Powering Our Wetware with a Dim Bulb

Beyond the abstract differences in architecture and function, there's a stark, practical distinction that highlights the brain's unparalleled efficiency: energy consumption. A modern supercomputer, capable of performing calculations on par with some human cognitive tasks, consumes megawatts of electricity – enough to power a small town. These machines require massive cooling systems to prevent overheating.

Your brain, however, operates on a mere **20 watts of power**, roughly the energy needed to illuminate a dim lightbulb. Yet, within that remarkably efficient package, it manages to perform feats of perception, cognition, and creativity that no supercomputer can match. This incredible energy efficiency is a testament to billions of years of biological evolution, optimizing resource allocation and information processing within a living system. The brain fuels its operations with glucose and oxygen, in stark contrast to the vast power grids required to feed our most advanced silicon counterparts.
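
The size of that gap is easy to work out. The sketch below takes the 20-watt figure from above and assumes, purely for illustration, a supercomputer drawing 20 megawatts; real machines vary, so treat the result as an order-of-magnitude comparison.

```python
BRAIN_WATTS = 20                    # figure quoted in this article
SUPERCOMPUTER_WATTS = 20_000_000    # assumed: 20 megawatts, illustrative only
HOURS_PER_DAY = 24

# Energy used in one day, in watt-hours.
brain_wh_per_day = BRAIN_WATTS * HOURS_PER_DAY
supercomputer_wh_per_day = SUPERCOMPUTER_WATTS * HOURS_PER_DAY

# How many brains could run on the supercomputer's power budget.
power_ratio = SUPERCOMPUTER_WATTS // BRAIN_WATTS

print(brain_wh_per_day)          # 480
print(supercomputer_wh_per_day)  # 480000000
print(f"{power_ratio:,}")        # 1,000,000
```

Under that assumption, the brain gets through a full day on less than half a kilowatt-hour, while the machine's power budget could run about a million brains.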

Conclusion: Beyond the Binary – Why the Brain is More Than a Machine

So, does the human brain work like a computer? The answer, as with most profound questions, is nuanced. The computer analogy has been an invaluable tool, providing a conceptual framework that has propelled our understanding of information processing, memory systems, and neural networks. It helps us conceptualize the brain’s incredible capacity for computation and organization.

However, it’s crucial to acknowledge where the analogy breaks down, revealing the brain’s truly unique nature. The brain is not merely a faster, wetter, more complex computer. It is an analog, massively parallel, self-organizing, adaptive, and energy-efficient biological system that gives rise to emergent properties like consciousness, subjective experience, genuine creativity, and profound emotional depth. It doesn’t just process information; it experiences, feels, and understands.

While we continue to build increasingly sophisticated AI that can mimic human-like abilities, the fundamental differences in architecture, processing, memory, and the very essence of what it means to be conscious suggest that the brain operates on principles we are only beginning to grasp. The computer is a powerful tool, a mirror reflecting our own logical capabilities, but the brain remains an organic marvel, a universe of “wetware” that transcends the binary logic of our most advanced machines. It is, in essence, more than a machine; it is the very engine of what makes us human.

Key Takeaways:

- The computer analogy is a useful heuristic for understanding brain function, especially information processing.

- Brains are largely analog and massively parallel, while computers are digital and primarily sequential.

- Human memory is reconstructive and associative, not precise and addressable like computer memory.

- The brain exhibits emergent properties like consciousness, self-awareness, and genuine creativity, which current computers lack.

- The brain is incredibly energy-efficient, operating on a fraction of the power required by supercomputers.

- Ultimately, the brain is a biological system with unique properties that go beyond any current machine metaphor.
