Unmasking Online Bots: The X & Facebook Mimicry Challenge

Online bots are automated software programs that skillfully mimic human behavior on X, Facebook, and e-commerce sites, making detection incredibly hard.

Unmasking the Digital Puppet Masters: Detecting Online Bots

Forget the clunky robots you imagine. Internet bots are far more complex, often invisible. They skillfully mimic human behavior. This makes them incredibly hard to spot.

Bots are automated software programs. They perform specific tasks quickly and repeatedly across online platforms. They are everywhere online: social media sites like X and Facebook, e-commerce stores, customer service chats, and forums. Humans interact with bots constantly in these digital spaces, usually without realizing it. Researchers and platforms face a big challenge. They must tell real human activity from clever automated operations.

Bots are Everywhere

Nearly half of all internet traffic in 2023 came from bots. Imperva’s annual Bad Bot Report puts the figure at 49.6%, about two percentage points higher than the year before. These bots range from harmless programs to harmful ones.

Imagine online interactions like a busy coffee shop. Humans chat, order, and move around. Bots are like actors hired to fill seats and blend in. Some are obvious, like a person in a giant sandwich costume. Others are very convincing, just like real customers.

Tracking human-bot interactions online involves several steps. The first is detection: deciding whether an interaction involves a bot. The second is estimation: working out how many bots are present. The third is characterization: understanding what the bots are for. This takes clever methods. It goes far beyond just looking for repetitive messages. Researchers at places like Indiana University’s Observatory on Social Media work on this.

Early Signs of Bots

Early bot detection used simple signature methods. This meant looking for predefined behavior patterns. For example, a bot might register an account with gibberish names like “asdfg123.” It might also post the same message across hundreds of accounts.
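To make the two signatures above concrete, here is a minimal Python sketch. It is not any platform’s actual rule set. The keyboard-row heuristic, the function names, and the `min_accounts` threshold are all assumptions chosen for illustration.

```python
import re
from collections import defaultdict

# Assumption: "gibberish" is approximated as a run of adjacent keys
# on one row of a QWERTY keyboard, such as "asdf" or "qwer".
KEYBOARD_ROWS = ("qwertyuiop", "asdfghjkl", "zxcvbnm")


def looks_like_keyboard_mash(name, min_run=4):
    """Return True if the letters in `name` contain a run of adjacent keys."""
    letters = re.sub(r"[^a-z]", "", name.lower())
    for row in KEYBOARD_ROWS:
        for i in range(len(row) - min_run + 1):
            if row[i:i + min_run] in letters:
                return True
    return False


def accounts_sharing_messages(posts, min_accounts=3):
    """Group (account, text) pairs by normalized text.

    Returns only the messages posted by at least `min_accounts`
    distinct accounts (a hypothetical threshold).
    """
    seen = defaultdict(set)
    for account, text in posts:
        normalized = " ".join(text.lower().split())
        seen[normalized].add(account)
    return {msg: accts for msg, accts in seen.items() if len(accts) >= min_accounts}


if __name__ == "__main__":
    print(looks_like_keyboard_mash("asdfg123"))
    sample = [("a", "Buy now!"), ("b", "buy  NOW!"), ("c", "Buy now!"), ("d", "hello")]
    print(accounts_sharing_messages(sample))
```

Rules like these are cheap to run, but they are also easy to evade. That is why detection moved on to the richer signals described later.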

One clear sign is how much an account posts. A human can’t realistically tweet 500 times in an hour. An account that posts that often suggests automation. Researchers at the University of Southern California documented this kind of high-volume posting in early bot studies.
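A posting-rate check can be sketched with a sliding window over an account’s timestamps. The function name and the one-hour window below are assumptions for illustration. Real systems would tune the window and the cutoff to their own data.

```python
from datetime import datetime, timedelta


def max_posts_in_window(timestamps, window=timedelta(hours=1)):
    """Return the largest number of posts that fall inside any single window."""
    times = sorted(timestamps)
    best = 0
    start = 0
    for end, current in enumerate(times):
        # Slide the left edge forward until the window fits again.
        while current - times[start] >= window:
            start += 1
        best = max(best, end - start + 1)
    return best


if __name__ == "__main__":
    base = datetime(2024, 1, 1)
    # One post every 7 seconds: 500 posts in under an hour.
    burst = [base + timedelta(seconds=7 * i) for i in range(500)]
    print(max_posts_in_window(burst))
```

An account whose peak hourly count approaches the 500 posts mentioned above would be a strong candidate for closer review.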


Time is another basic indicator. Bots often operate 24/7 without breaks. Humans, however, sleep and go online at varied times. A consistent, non-stop posting schedule is a red flag for automated activity. The account’s geographic origin also gives clues. Many bots use virtual private networks (VPNs) to hide their location. This can produce logins from far-apart places within minutes of each other.
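Both time-based clues can be sketched in a few lines of Python. The first function counts how many distinct hours of the day an account is active. The second flags consecutive logins that would require travelling faster than a chosen speed. The function names and the 1,000 km/h cutoff are assumptions for illustration, and the coordinates are presumed to come from some IP geolocation lookup that is not shown here.

```python
import math
from datetime import datetime, timedelta


def active_hours(timestamps):
    """Count the distinct hours of the day (0-23) in which the account posted."""
    return len({t.hour for t in timestamps})


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * radius_km * math.asin(math.sqrt(a))


def impossible_travel(logins, max_kmh=1000.0):
    """Flag consecutive logins that imply a speed above `max_kmh`.

    `logins` is a list of (datetime, latitude, longitude) tuples.
    """
    ordered = sorted(logins)
    flagged = []
    for (t1, lat1, lon1), (t2, lat2, lon2) in zip(ordered, ordered[1:]):
        hours = (t2 - t1).total_seconds() / 3600
        km = haversine_km(lat1, lon1, lat2, lon2)
        # Comparing km against max_kmh * hours avoids dividing by zero.
        if km > max_kmh * hours:
            flagged.append((t1, t2, round(km)))
    return flagged


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 9, 0)
    logins = [
        (start, 40.71, -74.01),                          # New York
        (start + timedelta(minutes=10), 35.68, 139.69),  # Tokyo
    ]
    print(impossible_travel(logins))
```

Neither signal is proof on its own. Shift workers post at odd hours, and ordinary people use VPNs too, so checks like these are best treated as one input among many.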

Smart Ways to Spot Bots

Modern bot detection relies on machine learning. These systems analyze huge amounts of data. They find subtle, non-obvious patterns. They look at hundreds of features at once. This is far more than any human could process. Cloudflare, a major internet security company, uses these techniques extensively.

Behavioral analytics is a key part. This studies how users interact with content and other users. A human might scroll, click different links, and spend varied times on pages. A bot often follows a predictable, efficient path. It might only click specific elements or ignore ads entirely.
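One simple way to put a number on “predictable” is the coefficient of variation of the time a visitor spends on each page. This is a sketch of a single behavioral feature, not a full analytics pipeline. The function name and the sample dwell times are assumptions.

```python
import statistics


def coefficient_of_variation(values):
    """Standard deviation divided by the mean; low values mean very regular behavior."""
    if len(values) < 2:
        raise ValueError("need at least two observations")
    mean = statistics.fmean(values)
    if mean == 0:
        return 0.0
    return statistics.pstdev(values) / mean


if __name__ == "__main__":
    scripted_dwell_seconds = [2.0, 2.0, 2.0, 2.0]       # same pause on every page
    human_dwell_seconds = [3.0, 45.0, 12.0, 120.0, 8.0]  # skims some pages, reads others
    print(coefficient_of_variation(scripted_dwell_seconds))
    print(coefficient_of_variation(human_dwell_seconds))
```

A value near zero means the visitor spent almost exactly the same time everywhere, which fits the predictable, efficient path described above. Sophisticated bots add random delays, so this feature works best alongside others.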

Network analysis is another strong tool. This technique maps the connections between accounts. Bots often form dense, interconnected networks that human users rarely create. Bot accounts might follow each other in unusual ways. Or they might share content at specific, coordinated times. A 2017 study by Filippo Menczer’s group at Indiana University included network features among the signals it used for detection.
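“Dense” has a simple numeric meaning: the share of possible follow links inside a group that actually exist. The sketch below computes that share for a set of accounts. The function name and the toy data are assumptions, and real network analysis uses many more graph features than this one.

```python
def follow_density(accounts, follows):
    """Fraction of possible directed follow links that exist within `accounts`.

    `follows` is an iterable of (follower, followed) pairs.
    Returns a value between 0.0 (no internal links) and 1.0 (everyone follows everyone).
    """
    members = set(accounts)
    n = len(members)
    if n < 2:
        return 0.0
    internal = {
        (a, b) for a, b in follows
        if a in members and b in members and a != b
    }
    return len(internal) / (n * (n - 1))


if __name__ == "__main__":
    ring = ["bot1", "bot2", "bot3"]
    ring_follows = [(a, b) for a in ring for b in ring if a != b]
    print(follow_density(ring, ring_follows))

    friends = ["ana", "ben", "cho", "dev"]
    friend_follows = [("ana", "ben"), ("cho", "ana")]
    print(follow_density(friends, friend_follows))
```

A cluster where nearly every account follows every other one is unusual among strangers. It can also describe a real group of close friends, so density alone should not be treated as a verdict.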

Natural Language Processing (NLP) helps analyze bot language. Bots write text, but their language often lacks a human touch. They might use repetitive phrases, odd sentence structures, or fail to grasp sarcasm. Classifiers can learn to tell human writing from bot writing, often quite well. They need constant retraining as bots change.
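Repetitive phrasing is the easiest of these signals to measure. The sketch below compares an account’s posts using overlapping three-word sequences and Jaccard similarity. This is plain lexical overlap, not the trained language models that production systems rely on. The function names and the shingle size are assumptions.

```python
from itertools import combinations


def shingles(text, n=3):
    """Return the set of n-word sequences in `text`, lowercased."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a, b):
    """Size of the intersection divided by size of the union of two sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def mean_pairwise_similarity(posts, n=3):
    """Average Jaccard similarity over every pair of posts from one account."""
    sets = [shingles(post, n) for post in posts]
    pairs = list(combinations(sets, 2))
    if not pairs:
        return 0.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    spam = ["great deal click here now", "great deal click here now"]
    varied = ["just landed in lisbon today", "anyone else watching the game tonight"]
    print(mean_pairwise_similarity(spam))
    print(mean_pairwise_similarity(varied))
```

A score close to 1.0 means the account keeps posting nearly the same words. Bots that paraphrase each message would slip past this check, which is one reason detection models keep changing.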

Bots Cause Real Problems

Bot activity has big real-world consequences. Bad bots disrupt everything from elections to financial markets. They erode trust and distort what people think. The 2016 US presidential election was a target of coordinated social media manipulation, including automated accounts. Reports by the Office of the Director of National Intelligence detail the broader influence campaign.

Bots twist public opinion. They spread misinformation and divisive content. They often make fringe views louder. A bot network can make a niche idea seem universally popular. This creates an echo chamber. It hardens people’s beliefs. Pew Research Center studies often show how social media affects political talk.


Economically, bots do a lot of damage. They commit click fraud, generating fake ad clicks and views for profit. They also carry out credential stuffing. This means trying username and password pairs stolen from one site against accounts on other sites. The average cost of a data breach hit $4.45 million in 2023, according to IBM’s Cost of a Data Breach Report. Automated attacks are one route to such breaches.
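Credential stuffing leaves a recognizable trace in login logs: one source failing against many different usernames. Here is a minimal sketch of that check. The log format, the function name, and the threshold of 20 usernames are assumptions for illustration, and the IP addresses come from ranges reserved for documentation.

```python
from collections import defaultdict


def suspected_stuffing_sources(attempts, min_usernames=20):
    """Return {ip: distinct_failed_usernames} for IPs at or above the threshold.

    `attempts` is an iterable of (ip, username, succeeded) tuples.
    """
    failed = defaultdict(set)
    for ip, username, succeeded in attempts:
        if not succeeded:
            failed[ip].add(username)
    return {
        ip: len(users)
        for ip, users in failed.items()
        if len(users) >= min_usernames
    }


if __name__ == "__main__":
    log = [("203.0.113.7", f"user{i}", False) for i in range(25)]
    # A real person mistyping their own password three times.
    log += [("198.51.100.4", "sam", False)] * 3
    log += [("198.51.100.4", "sam", True)]
    print(suspected_stuffing_sources(log))
```

Counting distinct usernames rather than total failures keeps a forgetful user from being flagged. Attackers often spread attempts across many IP addresses, so real defenses combine this with other signals.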

Customer service bots can be helpful. But they also affect user experience. If a customer can’t tell a human from an automated helper, frustration can mount. Businesses want smooth interactions. They must ensure their legitimate chatbots are clearly identified. This keeps things clear and builds user trust.

The Fight for Real Online Life

The arms race between bot creators and bot detectors never stops. As detection methods get better, bot developers come up with cleverer mimicry. This constant change needs new ideas from security researchers and platform providers. We’ll see smarter automated bots.

Future detection will use biometric data and behavioral biometrics more. This means analyzing unique human interaction patterns. Things like typing speed, mouse movements, and even how a user holds their phone could become detection signals. These methods aim to create a unique “human fingerprint” for online activity.
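As one hypothetical example of such a signal, mouse movement can be scored by how straight it is. Scripted cursors often jump in perfectly straight lines, while a human hand tends to wobble and curve. The function name and the sample coordinates below are assumptions, and this is only a sketch of a single feature.

```python
import math


def path_straightness(points):
    """Ratio of straight-line distance to distance actually travelled.

    `points` is a list of (x, y) cursor positions in order.
    Returns 1.0 for a perfectly straight path and lower values for curved ones.
    """
    if len(points) < 2:
        return 0.0
    travelled = sum(math.dist(p, q) for p, q in zip(points, points[1:]))
    if travelled == 0:
        return 0.0
    return math.dist(points[0], points[-1]) / travelled


if __name__ == "__main__":
    scripted = [(0, 0), (50, 50), (100, 100)]
    wandering = [(0, 0), (100, 0), (100, 100)]
    print(path_straightness(scripted))
    print(path_straightness(wandering))
```

A single straight movement proves nothing. The idea is that many such measurements, taken together, form the “human fingerprint” described above.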

Platforms like X (formerly Twitter) put a lot of money into bot detection. They constantly update their systems to fight spam and manipulation. The goal is to keep real human connection and trustworthy information alive. This effort is vital. It keeps platforms honest and users trusting them.

In the end, the internet’s realness needs teamwork. Researchers, tech companies, and policymakers must work together. They need to create standards and share info. This collective effort will help make sure the internet stays a place for real human interaction.

FAQ

Are all online bots bad? No, many bots are good. Customer service chatbots, search engine crawlers, and automated news aggregators are all useful. They make user experience better and give good services.

How do platforms like X (Twitter) detect bots? Platforms use several methods. These include analyzing how accounts are made, how often they’re active, their network connections, and the language in their posts. They use smart computer systems to spot suspicious behavior quickly.


Can I tell if I’m interacting with a bot? Sometimes, but it’s getting harder. Look for super-fast responses, repeated phrases, no personal context, or activity outside normal human hours. But clever bots can copy human conversation very well.

What’s the biggest challenge in bot detection? The biggest challenge is that bots are always changing. As detection gets better, bot creators adapt. They find smarter ways to copy human behavior. This creates a never-ending cat-and-mouse game.

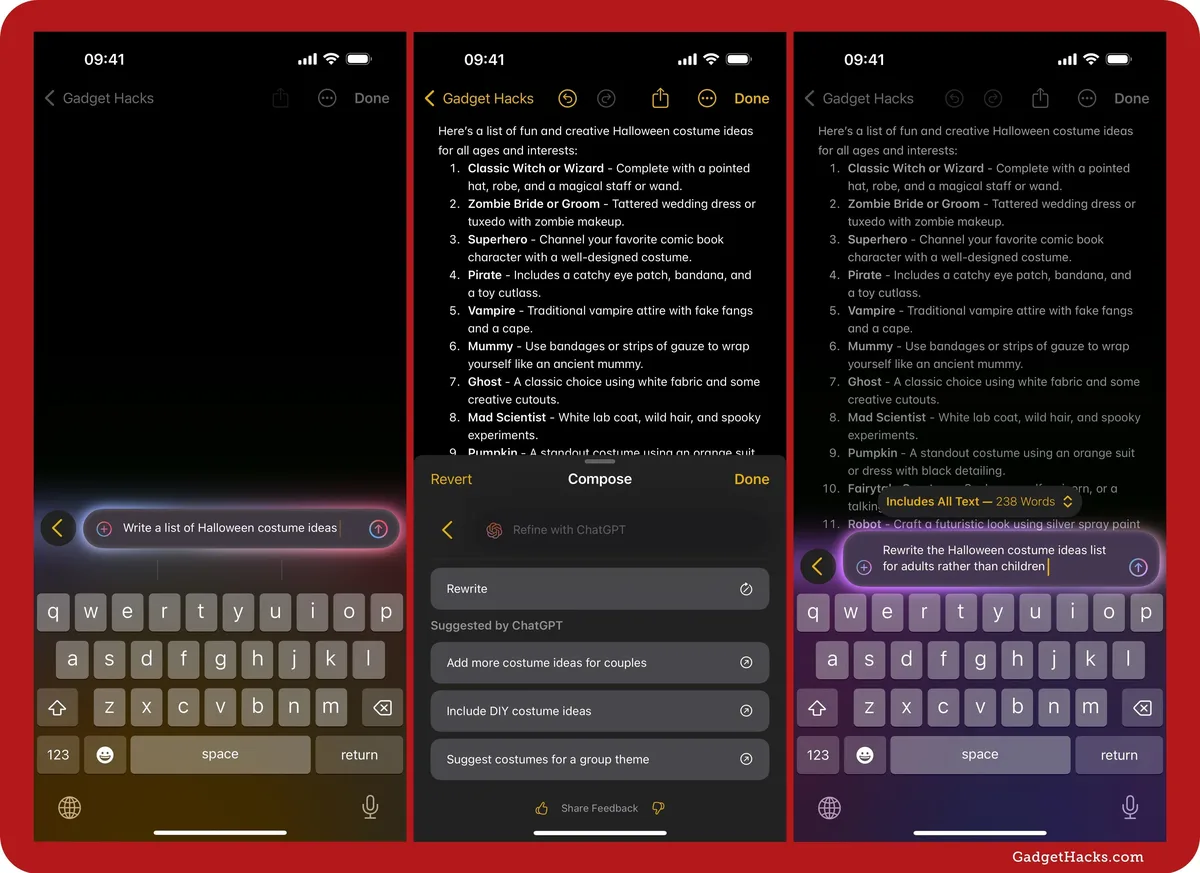

ChatGPT, developed by OpenAI, is a leading example of a 'clever bot' capable of generating highly human-like text, making it increasingly difficult to distinguish between human and AI interactions online. Its advanced conversational abilities underscore the ongoing 'cat-and-mouse game' in bot detection. (Source: apple.gadgethacks.com)
