AI's UN Human Rights Challenge: Developers Miss Legal Duties

AI systems increasingly threaten human rights, often without proper oversight. While “AI ethics” and “ethical AI principles” are widely discussed, these conversations frequently overlook the core issue: human rights, which are legally binding protections.

There is no need to invent new ethical frameworks for AI. Existing human rights laws already cover many concerns. AI systems use complex algorithms and vast amounts of data to make decisions that now touch every part of our lives. They determine who gets a loan, who gets hired, and who is flagged by law enforcement.

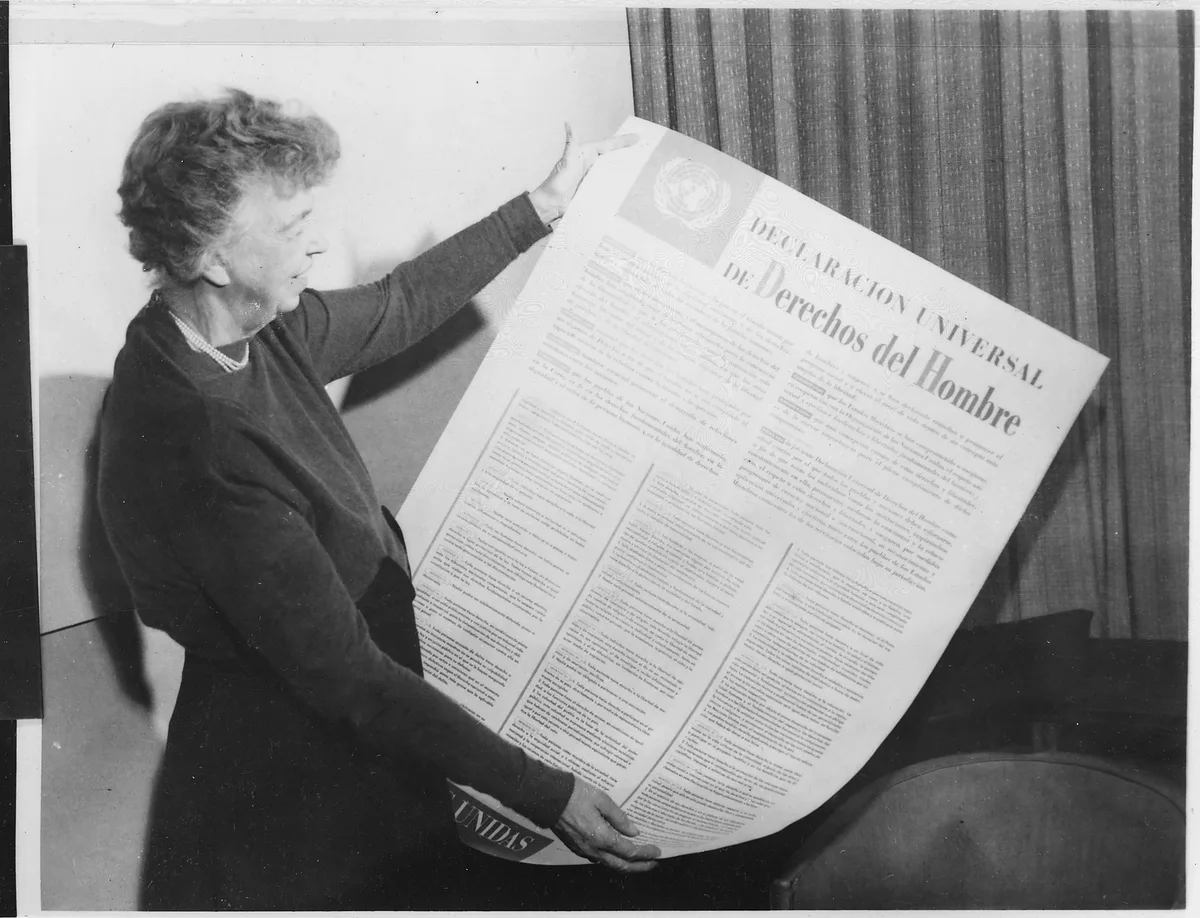

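Human rights are universal guarantees that protect everyone’s dignity, freedom, and equality. The modern framework took shape after World War II, when the UN General Assembly adopted the Universal Declaration of Human Rights in 1948. The declaration outlines key protections, including privacy, non-discrimination, free expression, and due process. The declaration itself is not a treaty, but its protections have since been written into binding instruments such as the International Covenant on Civil and Political Rights. For states that have ratified them, these are not suggestions. They are legal obligations. Increasingly, companies are expected to respect them too, for example under the UN Guiding Principles on Business and Human Rights.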

Developers often focus on “bias mitigation” or “transparency” as ethical goals. These intentions are good. However, such goals do not always translate into enforceable safeguards, and on their own they can fail to protect against human rights violations. Human rights protections are often missing from conversations about AI development.

AI’s invisible grip: How it harms our rights

In 2018, researcher Joy Buolamwini of the MIT Media Lab exposed a serious problem. Her study “Gender Shades,” co-authored with Timnit Gebru, found that commercial gender classification systems were far less accurate for some groups than for others. Error rates were highest for darker-skinned women and lowest for lighter-skinned men. This was not just an “ethical” issue. It directly implicated the right to non-discrimination.
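The kind of disparity described above only becomes visible when accuracy is broken down by subgroup rather than reported as a single number. The following is a minimal sketch of that idea, not the study's actual method or data. The records, the subgroup labels, and the function names `accuracy_by_subgroup` and `overall_accuracy` are all hypothetical and chosen purely for illustration.

```python
from collections import defaultdict

# Hypothetical toy records: (skin_type, gender, prediction_was_correct).
# These values are invented for illustration and are NOT Gender Shades data.
RECORDS = (
    [("lighter", "male", True)] * 10
    + [("lighter", "female", True)] * 9 + [("lighter", "female", False)] * 1
    + [("darker", "male", True)] * 9 + [("darker", "male", False)] * 1
    + [("darker", "female", True)] * 6 + [("darker", "female", False)] * 4
)


def overall_accuracy(records):
    """Share of all predictions that were correct."""
    return sum(ok for _, _, ok in records) / len(records)


def accuracy_by_subgroup(records):
    """Return {(skin_type, gender): accuracy} for each intersectional subgroup."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for skin_type, gender, ok in records:
        key = (skin_type, gender)
        totals[key] += 1
        correct[key] += int(ok)
    return {key: correct[key] / totals[key] for key in totals}


if __name__ == "__main__":
    print(f"overall: {overall_accuracy(RECORDS):.2f}")
    for subgroup, acc in sorted(accuracy_by_subgroup(RECORDS).items()):
        print(subgroup, f"{acc:.2f}")
```

In this toy data the aggregate figure looks respectable while one subgroup fares much worse, which is why a single headline accuracy number says little about non-discrimination.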

Bias is not limited to facial recognition. AI systems are used in hiring, credit scoring, and criminal justice. In 2016, ProPublica reported on COMPAS, a risk assessment tool used in U.S. courts. Its analysis found that Black defendants who did not go on to reoffend were almost twice as likely as white defendants to be wrongly labeled high risk. This undermines the right to a fair trial and equal treatment under the law.
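The disparity at issue here is a gap in false positive rates: among people who did not reoffend, how many were still flagged as high risk? Here is a minimal sketch of that comparison. It assumes each record is a hypothetical `(flagged_high_risk, reoffended)` pair, and the two groups and their values are invented toy data, not ProPublica's dataset or methodology.

```python
def false_positive_rate(records):
    """Share of people who did NOT reoffend but were still flagged high risk.

    Each record is a (flagged_high_risk, reoffended) pair of booleans.
    """
    flags_for_non_reoffenders = [
        flagged for flagged, reoffended in records if not reoffended
    ]
    if not flags_for_non_reoffenders:
        raise ValueError("group contains no non-reoffenders")
    return sum(flags_for_non_reoffenders) / len(flags_for_non_reoffenders)


# Hypothetical toy data, invented for illustration only.
GROUP_A = (
    [(True, False)] * 4 + [(False, False)] * 6   # non-reoffenders: 4 of 10 flagged
    + [(True, True)] * 5 + [(False, True)] * 5   # reoffenders are ignored by FPR
)
GROUP_B = (
    [(True, False)] * 2 + [(False, False)] * 8   # non-reoffenders: 2 of 10 flagged
    + [(True, True)] * 5 + [(False, True)] * 5
)

if __name__ == "__main__":
    fpr_a = false_positive_rate(GROUP_A)
    fpr_b = false_positive_rate(GROUP_B)
    print(f"group A FPR: {fpr_a:.2f}, group B FPR: {fpr_b:.2f}")
    print(f"ratio: {fpr_a / fpr_b:.1f}x")
```

Note that both toy groups are flagged correctly at the same rate among reoffenders, so a tool can look equally "accurate" for two groups while its mistakes fall far more heavily on one of them.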

Privacy rights are another major casualty. Consider Clearview AI. The company scraped billions of photos from the internet without consent. It then used them to build a vast facial recognition database. In 2022, the American Civil Liberties Union (ACLU) settled a lawsuit against Clearview AI. This settlement limited Clearview’s ability to sell its database to private companies. The case showed how easily AI systems can violate privacy on a massive scale.

Free expression and access to information also face threats. Content moderation algorithms are used by social media platforms. These algorithms often make mistakes. They remove legitimate speech or allow harmful content to spread. Researchers at the Electronic Frontier Foundation (EFF) documented how these systems lack transparency and accountability. Their decisions impact public discourse and individual liberties.

The regulatory gap: Where laws fall short

AI’s rapid development has outpaced legal safeguards. For decades, human rights protections mainly applied to state actions. Now, powerful tech companies wield immense influence. Their AI systems often operate globally. This means they cross national borders and legal jurisdictions.

Many tech companies issue their own ethical AI principles. Google published its AI Principles in 2018, and Microsoft has published its own guidelines. These commitments are voluntary and often lack independent oversight or enforcement. This contrasts sharply with human rights, which are protected by international and national laws.

The United Nations Human Rights Office (OHCHR) published a report in 2021. This report showed the disparity between voluntary guidelines and legal protections. It called for states to regulate AI using human rights laws. The report stated that voluntary guidelines are not enough to prevent abuses. It stressed the need for legal accountability.

Some regions are moving toward stronger regulation. The European Parliament approved the EU AI Act in March 2024. This landmark law categorizes AI systems by risk level. High-risk systems, such as those used in critical infrastructure or law enforcement, face strict requirements covering data quality, human oversight, and transparency. This is a major step toward legally binding AI governance.

The European Union AI Act, approved by the European Parliament in March 2024, is widely described as the world's first comprehensive law regulating artificial intelligence. It categorizes AI systems by risk level, imposing strict requirements on high-risk applications to protect fundamental rights. (AI-generated illustration)

The United Nations Educational, Scientific and Cultural Organization (UNESCO) also adopted a Recommendation on the Ethics of Artificial Intelligence in 2021. This global standard urges member states to develop national policies. These policies should align AI with human rights. However, a “recommendation” is not a legally binding treaty. Its effectiveness depends on national implementation.

Real consequences: Stories from the front lines

AI systems have already led to human rights abuses. In the Netherlands, the government used a system called SyRI (System Risk Indication) to detect welfare fraud. It disproportionately flagged people from lower-income neighborhoods and those with non-Western backgrounds. In 2020, a Dutch court ruled that SyRI was unlawful because it violated the right to private life, and it warned of the system's potential for discrimination.

China’s extensive use of AI surveillance offers another clear example. The government deploys facial recognition and gait analysis to monitor its citizens. These systems are central to its social credit system. This system assigns scores based on individual behavior. It affects access to services, travel, and employment. Human Rights Watch documented how this technology enables mass surveillance. It also limits movement and expression.

In the United States, predictive policing tools have been used in cities like Chicago. These algorithms identify individuals believed to be at higher risk of committing or becoming victims of gun violence. A 2016 RAND Corporation study found little evidence that such tools reduce crime. Instead, they can reinforce existing biases, leading to over-policing in marginalized communities and undermining the rights to liberty and security.

AI systems in healthcare also show concerning biases. A 2019 study in Science examined a widely used algorithm for managing patient care. Because the algorithm used healthcare costs as a proxy for health needs, it rated Black patients as lower risk than equally sick white patients, so they were less likely to be offered extra care. This perpetuated existing racial disparities in healthcare access and undermined the right to health. These examples illustrate the damage unchecked AI can do.
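The proxy problem in the healthcare example can be shown with a small sketch. It assumes a made-up roster of patients in which one group has historically generated lower costs at the same level of need, for instance because of poorer access to care. The `Patient` fields, the group labels, the figures, and the `select_for_extra_care` helper are all hypothetical. This is not the algorithm the study examined.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Patient:
    name: str
    group: str
    chronic_conditions: int  # hypothetical stand-in for true health need
    annual_cost: float       # hypothetical stand-in for past spending


# Invented toy data: group B has lower past costs at similar levels of need.
PATIENTS = [
    Patient("p1", "A", 5, 10000.0),
    Patient("p2", "B", 5, 6000.0),
    Patient("p3", "A", 3, 7000.0),
    Patient("p4", "B", 4, 5000.0),
    Patient("p5", "A", 1, 3000.0),
    Patient("p6", "B", 2, 2000.0),
]


def select_for_extra_care(patients, score, slots):
    """Rank patients by the given score (highest first) and fill the slots."""
    ranked = sorted(patients, key=score, reverse=True)
    return [p.name for p in ranked[:slots]]


# Ranking by past cost versus ranking by actual need picks different people.
by_cost = select_for_extra_care(PATIENTS, lambda p: p.annual_cost, slots=2)
by_need = select_for_extra_care(PATIENTS, lambda p: p.chronic_conditions, slots=2)

if __name__ == "__main__":
    print("selected by cost:", by_cost)
    print("selected by need:", by_need)
```

In this toy roster, ranking by cost passes over a group B patient who is just as sick as the top-ranked group A patient. No field in the data mentions race or group membership in the scoring step, yet the choice of proxy alone produces the unequal outcome.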

FAQ: AI ethics vs. human rights

What’s the main difference between AI ethics and human rights? AI ethics often refers to voluntary guidelines or principles for responsible AI development. Human rights are legally binding protections. They are guaranteed to all individuals under international and national law. Ethics are aspirational. Rights are enforceable.

Do existing human rights laws apply to AI? Yes, absolutely. Human rights instruments such as the Universal Declaration of Human Rights are technology-neutral. They protect an individual’s rights regardless of the technology that affects them. This includes AI systems developed by companies or deployed by governments.

Who is responsible when AI violates human rights? Both AI system developers and the entities that deploy them can be held responsible. Governments must protect human rights. This includes regulating AI. Companies also have a responsibility to respect human rights throughout their operations.

What’s being done to address these concerns? Governments and international bodies are developing new laws and standards. The EU AI Act is a major example. Organizations such as Amnesty International and the OHCHR advocate for AI regulation grounded in human rights.

Moving forward: Closing the gap

Human rights must be central to AI development. We cannot treat them as an afterthought. They must be the foundation. This requires moving beyond voluntary principles to legally binding laws.

Governments must mandate human rights impact assessments for all high-risk AI systems. These assessments should identify potential harms before deployment. Governments also need to establish clear accountability. Companies must be held liable for rights violations caused by their AI.

International cooperation is essential. AI is a global technology, so we need global norms and standards that consistently protect human rights. The OHCHR’s work provides a strong starting point for these discussions.

We must also demand greater transparency from AI developers. Understanding how algorithms make decisions is vital. It helps identify and challenge bias, and it empowers individuals to assert their rights. The future of human rights in an AI-driven world depends on us. We must choose to prioritize people over unchecked technology. Let’s make sure this happens.
